Power Through Chaos

A rational reason for irrational technology designs.


All of us have a nerd in our lives - the cousin, brother-in-law, or son who helps us beat back the Blue Screen of Death or whatever latest glitch infests our smartphone. While the younger generation is fairly familiar with how to handle personal electronics, for most people it's basically magic that works when you produce the right incantation.

There is, of course, a vast underlying reality of multifarious pieces of technology that allow our modern interconnected world of infinite communications to function. Decades ago, a handful of guys in a garage could change the world.

It's not like that anymore - 99% of the time, it takes a large team of experts in different disciplines to make a new technology work. Once upon a time, software was like a book in that one guy could write it, or maybe a small team. Nowadays it's like writing an encyclopedia: there are hundreds of people involved, most of whom never stray from their own particular specialty.

As vital as information technology is to our world, you'd think they'd all be pretty well paid. By national standards, they are; but by the standards of the past, current IT salaries are nothing like as large as they once were.

Not a day goes by that a newspaper doesn't report on the reason why: we are now in competition with everyone else in the world, from Africa to India to China, and even against Africans and Indians and Chinese right here at home. This drives down wages just as Adam Smith would predict.

So what's a well-educated techie who likes his Mercedes to do, when there's some other fellow with an accent who'll do the same work for half the price?

Complexity By Design

It turns out that there's a pretty good answer. Most of us have found computer programs frustrating from time to time; they get better slowly over the years, but can still be surprisingly opaque and unreliable.

What you may not realise is that you are getting the best of user interface design. Remember the old command prompt of MS-DOS from your school days? Most of the world's Internet servers use an interface that looks almost the same. It's UNIX instead of DOS, and the commands offered are vastly more powerful than anything on the old IBM PC, but from the point of view of usability it's just as unintelligible to the uninitiated.

A reasonably intelligent person can, sort of, figure out how to use Microsoft Windows and Office just by poking around at the buttons on the screen. Nobody can figure out anything by randomly poking at a command prompt; how would you know what commands to enter or what they might do? You have to actively learn the commands. You must take a course, be taught by somebody else, or do a whole lot of Googling.

This creates a premium on knowledge. It is not possible to hire just anybody off the street to run a server farm; to do anything effective, system administrators must have training and experience. This, naturally, lets them demand more money, and makes it harder and riskier to replace them with cheaper people.

The result is what the cynical would expect: inside the tech world, there seems to be a positive glee in making essential software as difficult to use as possible. Let's consider just one example among many.

When you have a large team working on the same piece of software, it's impossible to keep everybody from stepping on each other's work without using a complex piece of support software called a version control system. This keeps track of all the changes everyone is making, so you can spread the work around or rip changes out easily if they're buggy.
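For the curious, the bookkeeping idea can be sketched in a few lines of Python. This is a toy under loud assumptions: the names `ToyHistory`, `record`, and `rip_out` are invented for illustration, everything lives in memory, and it is not how Git or any real version control system stores its data.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Change:
    """One recorded change: who made it, and a file's text before and after."""
    author: str
    filename: str
    before: Optional[str]  # None means the file did not exist
    after: Optional[str]


@dataclass
class ToyHistory:
    """Hypothetical in-memory change log; not a real version control system."""
    files: Dict[str, str] = field(default_factory=dict)
    log: List[Change] = field(default_factory=list)

    def record(self, author: str, filename: str, new_text: str) -> int:
        """Store a change and return its position in the log as an id."""
        change = Change(author, filename, self.files.get(filename), new_text)
        self.files[filename] = new_text
        self.log.append(change)
        return len(self.log) - 1

    def rip_out(self, change_id: int) -> None:
        """Undo one change, but only if nobody has edited the file since."""
        change = self.log[change_id]
        if self.files.get(change.filename) != change.after:
            raise ValueError("file changed since; a real tool would need a merge")
        if change.before is None:
            del self.files[change.filename]
        else:
            self.files[change.filename] = change.before
        # The undo is itself recorded, so the history stays complete.
        self.log.append(Change("revert", change.filename, change.after, change.before))


repo = ToyHistory()
repo.record("alice", "login.py", "v1")
bad = repo.record("bob", "login.py", "v2 (buggy)")
repo.record("carol", "report.py", "v1")
repo.rip_out(bad)
print(repo.files)
```

Bob's buggy change disappears while Carol's unrelated work survives. The hard part, which this sketch refuses to handle, is what to do when two people have touched the same file; that is where real tools earn their complexity.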

By its nature, version control software is going to be a complicated affair. The currently most popular version control system, an open-source product called "Git," laughs at this problem: it is so opaque that computer scientists get into shouting matches over how to execute the simplest of functions. This amusing article, "10 things I hate about Git," gives you some idea of the problem.

Please remember, all the commenters breathing fire over the frustrations of using Git are well-paid software professionals who, in general, know what they are doing - or at least, they know enough to convince their employers that they know what they're doing. None of them are "normal" people; all spend all day every day in front of a glowing screen. Yet they're confounded on a daily basis by obscurantist Git.

Why is this tolerated? Aren't there any software developers smart enough and annoyed enough to come up with something easier to use?

Monopolies of Knowledge

Sure there are, and in fact there are other version control systems that are reputed to be far easier to use. But very few projects use them. Why is this?

Consider how a new commercial software project is started. Some executive with money to invest has an idea and hires a chief software architect to make it happen. This top-flight nerd is the guy who knows how to "do software" - that's why the executive hired him. He's an expert, so naturally the business people follow his plans, within reason.

Is it in the chief software architect's best interest to design the software using tools that anybody can use? Of course not! It's vastly more prudent to use tools that only very smart people can understand and which routinely require his personal knowledge to unravel. If the business executives cannot do without him for more than the week of his annual vacation, they're unlikely to dare lay him off and replace him with someone in Bangalore.

Of course, normal people have to use the resulting software, so a halfway friendly interface is important to maintain sales. Under the hood, though, the messier the better for all involved, except for the next generation who'll have to somehow figure it out to improve it.

That's their problem, of course. For today's paycheck, chaos is power, and being an initiate in some obscure but essential black art - especially one made artificially and needlessly complex - is the royal road to a comfortable retirement.

-

Tools:

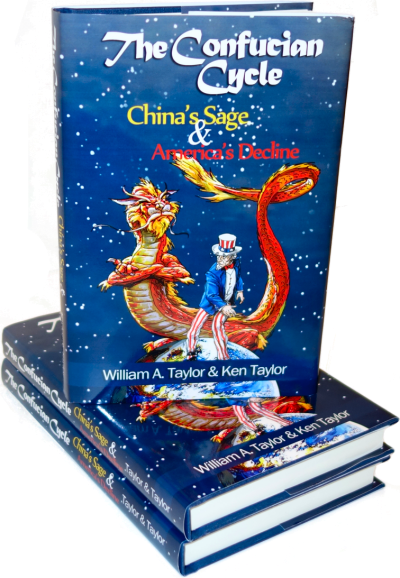

What does Chinese history have to teach America that Joe Biden doesn't know?

As one of those nerds, I have to disagree with the conclusion here. It's easy to understand how non-nerds would think that chaos or confusion is the point, but it's really not. Nerds are probably the least political people on earth. Their actions are typically the result of sincere belief and experimentation. Very little has to do with job preservation or the like.

Products like Git really are better than their competitors in a lot of ways. The UIs may be behind and the learning curves are sometimes steep but they typically answer a problem that the others don't.

Git, for instance, solves the problem of distributed branch/merge conflicts for software teams that do a lot of parallel iterating. Before Mr. Torvalds created Git, centralized systems such as CVS and SVN were the go-tos, and they left a lot to be desired unless the software team followed a very linear waterfall progression.

I think the problem with chaos and confusion stems mostly from a lack of talent on most software teams. It used to be that software teams were only staffed with REAL nerds (i.e., pocket-protector-wearing, textbook-studying academics). They lived and breathed code in a pre-internet world where that knowledge was VERY difficult to collect and disseminate.

In the modern day, code is everywhere and code is easy. Toolkits, SDKs, how-to blogs, fancy IDEs, and component integration from central servers (like NuGet) make it so even non-nerds can pretend to be nerds and make a good living. Heck, you can write code and deploy it in the cloud without even installing or running a local compiler on your machine!

But then you get teams of people where 80% of the team is just that - a script kiddie - and the other 20% knows how to really debug problems and deal with different feature branches. The 80% struggles to understand and, typically, defers all that to the 20% guys.

Technology is probably the single truest meritocracy left on earth. Software that becomes popular is almost always popular for a valid reason. It's hard to get nerds to bite on something simply because it has bright colors and clever marketing. If it doesn't actually work better, scratch an itch or fix a problem, it's likely not to get much market penetration in the long run. A lot of the complicated products you're referring to really do have valid purposes behind their popularity other than a cynical political move to preserve jobs.

There are also advantages to the command prompt style. It is far faster than any GUI. Sure, if you have good keyboard shortcuts (something that is rapidly disappearing from new software) you can get about the same speed, but having a GUI makes people not want to take the time to learn them even when they do exist. I am faster than many of my coworkers simply because I know and use many of the Microsoft keyboard shortcuts. If we were using a DOS-based system, everyone would be using them.

More importantly, from a programming standpoint, many of the people who write the code are interested in what they do. Take Git: the original person or team that wrote it was interested in version control. That's what they love, so that's what they wrote. UI is generally not the most entertaining part to program. I hate working on UIs ... so I tend not to. I want to write whatever it is that I'm trying to create, whether a more useful loan calculator or AI for a video game; I don't really want to spend time programming the UI, which is entirely boring in my opinion.

Wow, this article is horribly cynical and wrong, even by Scragged's standards.

We have been called cynics before, Jason. What do you think of this?

http://www.scragged.com/articles/cynicism-and-teh-confucian-cycle

Putting evil intentions behind every decision not understood truly reveals its folly when applied to a topic where one has such apparently limited understanding.

Intent doesn't matter, Jason, what matters is outcome. For example, President Johnson's Great Society promised to lift people out of poverty. Instead, it's trapped fatherless kids in Democrat-run cities with failing schools, no jobs, and no hope.

Even the New York Times admits that this isn't for lack of money. The problem is that their programs simply don't work, and to continue them unchanged is evil. That's how we're burning down our cities.

Your point has no bearing on mine.