Brain-Dead Mixing of Science and Politics

Government funding has destroyed science.


In "Trawling the Brain," Science News reports the latest instance of peer-reviewed scientific gimmickry:

The 18-inch-long Atlantic salmon lay perfectly still for its brain scan. Emotional pictures - a triumphant young girl just out of a somersault, a distressed waiter who had just dropped a plate - flashed in front of the fish as a scientist read the standard instruction script aloud. The hulking machine clunked and whirred, capturing minute changes in the salmon's brain as it assessed the images. Millions of data points capturing the fluctuations in brain activity streamed into a powerful computer, which performed herculean number crunching, sorting out which data to pay attention to and which to ignore. [emphasis added]

By the end of the experiment, neuroscientist Craig Bennett and his colleagues at Dartmouth College could clearly discern in the scan of the salmon's brain a beautiful, red-hot area of activity that lit up during emotional scenes.

An Atlantic salmon that responded to human emotions would have been an astounding discovery, guaranteeing publication in a top-tier journal and a life of scientific glory for the researchers. Except for one thing. The fish was dead.

[Image caption: "Put me back in the MRI..."]

fMRI, the research technique under investigation, uses high-powered computers to create real-time, animated images showing areas of high activity in a brain. Strictly speaking, it measures changes in blood oxygenation, which serve as an indirect proxy for neural activity. Scientists using fMRI claim to have found regions of the brain which are responsible for tennis, fairness, and much else. The CIA sponsored fMRI research because it would be handy to isolate the regions of the brain which are responsible for, say, Islamic terrorist activity.

The difficulty is that each experimenter has been free to choose exactly how to analyze the data, and can change the method of analysis at any time. If you take any large data set and analyze it any way you like, you can always find seemingly significant results, because at the conventional 5% significance threshold roughly one test in twenty looks "significant" by pure chance. That's pretty much what pyramidologists do when analyzing the dimensions of the Great Pyramid and "predicting" past events such as the departure of the Jews from Egypt.
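To see how freedom of analysis manufactures findings, here is a minimal Python simulation. It assumes a hypothetical study in which nothing real is going on: every subject's response on every measure is pure Gaussian noise, and the analyst tries up to 20 different measures (an assumed number) and stops at the first one that crosses p < 0.05. The p-value comes from a simple z-test that assumes the noise variance is known to be 1; real fMRI pipelines are far more elaborate.

```python
import math
import random

def z_test_p(a, b):
    """Two-sided p-value for a difference in means, assuming noise with known variance 1."""
    z = (sum(a) / len(a) - sum(b) / len(b)) / math.sqrt(1 / len(a) + 1 / len(b))
    return math.erfc(abs(z) / math.sqrt(2))

def study_finds_something(rng, n_subjects=20, n_measures=20, alpha=0.05):
    """One noise-only study: try measure after measure, stop at the first 'significant' one."""
    for _ in range(n_measures):
        emotional = [rng.gauss(0, 1) for _ in range(n_subjects)]
        neutral = [rng.gauss(0, 1) for _ in range(n_subjects)]
        if z_test_p(emotional, neutral) < alpha:
            return True  # publishable "finding"
    return False  # nothing worked this time

rng = random.Random(42)
trials = 2000
hits = sum(study_finds_something(rng) for _ in range(trials))
print(f"{hits / trials:.0%} of noise-only studies found a 'significant' effect")
```

With 20 independent tries, theory gives 1 - 0.95^20, or about 64%, so roughly two-thirds of these studies of nothing should turn up a "finding."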

I tend to agree with a number of pyramid and pyramidology authors who say that if you look at the sum total of all of the possible symbolisms encoded in the Great Pyramid, and that even if only 10% of this information was truly intended (and you pick any 10% subset), then even this 10% is beyond a coincidence! [emphasis added]

As pyramidologists have found, being able to choose which data to publish after collecting them allows you to reach pretty much any conclusion you desire. The only way to validate any given way of analyzing data is for other scientists to repeat the same analysis on a different set of data from different people who are following the same instruction script, and see whether the new data give the same brain clusters. That's called "repeatability"; analysis which can be repeated is called "replicable".
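A minimal Python sketch of why replication weeds out flukes. It assumes a hypothetical "brain" of 10,000 voxels, 100 of which respond strongly to the task (an assumed effect of 1.5 noise standard deviations) while the rest are pure noise, 15 subjects per condition, and a simple z-test with known unit variance. Voxels that pass p < 0.05 in the first study are retested on fresh data with the same analysis.

```python
import math
import random

def z_test_p(a, b):
    """Two-sided z-test p-value for a mean difference, assuming unit-variance noise."""
    z = (sum(a) / len(a) - sum(b) / len(b)) / math.sqrt(1 / len(a) + 1 / len(b))
    return math.erfc(abs(z) / math.sqrt(2))

def run_study(rng, effects, n_subjects=15):
    """One simulated study: effects[v] is voxel v's true response (0.0 means pure noise)."""
    pvals = []
    for effect in effects:
        task = [rng.gauss(effect, 1) for _ in range(n_subjects)]
        rest = [rng.gauss(0, 1) for _ in range(n_subjects)]
        pvals.append(z_test_p(task, rest))
    return pvals

# Hypothetical brain: 100 voxels with a genuine (large) effect, 9,900 that are pure noise.
effects = [1.5] * 100 + [0.0] * 9_900
rng = random.Random(7)
alpha = 0.05

first = run_study(rng, effects)   # original study
second = run_study(rng, effects)  # replication: new subjects, same script, same analysis

def replication_rate(voxels):
    """Share of voxels 'significant' in study 1 that are significant again in study 2."""
    hits = [v for v in voxels if first[v] < alpha]
    return sum(second[v] < alpha for v in hits) / len(hits)

real, noise = range(100), range(100, 10_000)
print(f"real effects replicate {replication_rate(real):.0%} of the time")
print(f"noise 'effects' replicate {replication_rate(noise):.0%} of the time")
```

Genuine effects should show up again almost every time, while noise "discoveries" replicate only at about the 5% chance rate, so a second data set sorts one from the other.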

For good reason, repeatability is one of the core requirements of science; pyramidology is by definition not science because the Pharaohs did not trouble to leave us their engineering drawings, magic spells, or contact info for space aliens, so what they did can't be repeated. Unfortunately, previously vaunted brain-scan results seem to fall into the same netherworld of pseudoscience:

"It's a dirty little secret in our field that many of the published findings are unlikely to replicate," says neuroscientist Nancy Kanwisher of MIT. [emphasis added]

When the experimenters analyzed the data collected from the deceased salmon's brain and included checks to keep random fluctuations from seeming significant, the data showed no brain activity at all. Brain activity in the dead fish was purely an artifact of manipulative mathematics - that, or proof of life after piscine death, an even more revolutionary discovery.
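The "checks" mentioned above are multiple-comparison corrections. The source doesn't say which ones were used, so the Python sketch below shows two standard ones, Bonferroni and Benjamini-Hochberg (false discovery rate), applied to a simulated dead fish. It relies on the fact that when there is no real effect, a valid test's p-values are uniformly distributed between 0 and 1; the voxel count of 100,000 is an assumption, not the salmon study's figure.

```python
import random

def bonferroni(pvals, alpha=0.05):
    """Indices that survive Bonferroni: each test must clear alpha / m on its own."""
    m = len(pvals)
    return [i for i, p in enumerate(pvals) if p <= alpha / m]

def benjamini_hochberg(pvals, q=0.05):
    """Indices declared significant by the Benjamini-Hochberg step-up procedure."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])  # indices from smallest p upward
    cutoff = 0
    # step-up rule: find the largest rank k with p_(k) <= k * q / m
    for rank, i in enumerate(order, start=1):
        if pvals[i] <= rank * q / m:
            cutoff = rank
    return sorted(order[:cutoff])  # everything ranked at or below k is reported

# Simulated dead fish: with no real signal anywhere, each voxel's p-value is uniform on [0, 1).
rng = random.Random(2009)
m = 100_000  # hypothetical voxel count
null_p = [rng.random() for _ in range(m)]

uncorrected = sum(p < 0.05 for p in null_p)
print("uncorrected p < 0.05:", uncorrected)
print("Bonferroni survivors:", len(bonferroni(null_p)))
print("Benjamini-Hochberg survivors:", len(benjamini_hochberg(null_p)))
```

Uncorrected, about 5% of the noise voxels "light up"; both corrections should leave essentially nothing, which is the dead salmon's lesson. Bonferroni limits the chance of any false positive at all, while Benjamini-Hochberg limits the expected share of false positives among the reported voxels and is less strict when real effects are present.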

Computer-Based Imaging

Computers have been creating valuable images from large data sets for many years, so there's nothing fundamentally wrong with the ideas behind fMRI. Computed axial tomography, or CAT scans, are made by passing a beam of radiation through the body at many different angles. Once the amount of radiation that comes through at each angle is known, a computer analyzes the data to determine how body tissues such as bone, blood, and various cells must be located to match the measurements.
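The "computer analyzes the data" step can be illustrated with a toy version of algebraic reconstruction (ART, also known as the Kaczmarz method), one of several reconstruction techniques; modern scanners mostly use filtered back-projection or newer iterative methods, so this is a sketch of the idea, not of any real scanner's software. Assumptions: a made-up 3x3 grid of absorption values, noise-free readings, and beams along rows, columns and both diagonal directions, each reporting the total absorption of the cells it crosses.

```python
def ray_paths(n):
    """Cells crossed by each beam through an n-by-n grid: rows, columns, both diagonals."""
    rays = [[(i, j) for j in range(n)] for i in range(n)]            # horizontal beams
    rays += [[(i, j) for i in range(n)] for j in range(n)]           # vertical beams
    rays += [[(i, i - d) for i in range(n) if 0 <= i - d < n]        # diagonals, i - j = d
             for d in range(-(n - 1), n)]
    rays += [[(i, s - i) for i in range(n) if 0 <= s - i < n]        # anti-diagonals, i + j = s
             for s in range(2 * n - 1)]
    return rays

def measure(image, rays):
    """Simulated detector readings: total absorption along each beam."""
    return [sum(image[i][j] for i, j in ray) for ray in rays]

def reconstruct(rays, readings, n, sweeps=1000):
    """Kaczmarz/ART: repeatedly nudge the estimate until it matches every reading."""
    est = [[0.0] * n for _ in range(n)]
    for _ in range(sweeps):
        for ray, reading in zip(rays, readings):
            error = reading - sum(est[i][j] for i, j in ray)
            for i, j in ray:
                est[i][j] += error / len(ray)  # spread the mismatch evenly along the beam
    return est

phantom = [[0, 1, 0],
           [1, 3, 1],
           [0, 1, 2]]  # made-up absorption values, not real tissue data
rays = ray_paths(3)
image = reconstruct(rays, measure(phantom, rays), 3)
for row in image:
    print(" ".join(f"{v:4.2f}" for v in row))
```

Starting from a blank image, each pass adjusts the cells along every beam until the predicted totals match the measured ones. With these 16 beams the 9 unknown cells are fully determined, so the estimate converges to the original grid; with too few angles the same procedure would settle on just one of many images consistent with the readings, which is why scanners measure at many angles.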

The idea originated in 1967, the first clinical brain scan was made in 1971 and announced in 1972, and the inventors shared the Nobel Prize in Physiology or Medicine in 1979.

CAT scans and Magnetic Resonance Imaging (MRI), a more advanced form of medical imaging, have two characteristics that avoid the difficulties being reported with fMRI:

The same software is used for all patients who pass through a given machine. This makes the results replicable.

When patients are cut open during surgery, doctors are inclined to complain vehemently about any errors in the images. This provides detailed, ongoing software verification.

There's no such feedback with fMRI, of course, because we can't cut open a brain and see if the regions fMRI says are active are in fact active.

As computers have become larger and more powerful, researchers are able to analyze data in more and more complicated ways. Pitfalls arise as the analysis gets too complicated:

"It's hellishly complicated, this data analysis," says Hal Pashler, a psychologist at the University of California, San Diego. "And that creates great opportunity for inadvertent mischief."

Making millions, often billions, of comparisons can skew the numbers enough to make random fluctuations seem interesting, as with the dead salmon. The point of the salmon study, Bennett says, was to point out how easy it is to get bogus results without the appropriate checks. [emphasis added]
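The arithmetic behind "millions of comparisons" can be written out, assuming for simplicity that every null hypothesis is true and, for the second formula, that the m tests are independent (neighboring fMRI voxels are actually correlated, so this is an idealization). If each test uses significance level α and V counts the false positives:

```latex
\begin{aligned}
\mathbb{E}[V] &= m\,\alpha, \qquad \Pr(V \ge 1) = 1 - (1-\alpha)^{m},\\
m = 10^{6},\ \alpha = 0.05 \;&\Rightarrow\; \mathbb{E}[V] = 50{,}000,\quad \Pr(V \ge 1) \approx 1,\\
\text{Bonferroni: test at } \alpha/m \;&\Rightarrow\; \Pr(V \ge 1) \le \sum_{i=1}^{m} \frac{\alpha}{m} = \alpha .
\end{aligned}
```

The first formula follows from linearity of expectation and needs no independence; the Bonferroni bound follows from the union bound and also holds without independence. The price of that strictness is that genuine but modest effects may fail to clear the threshold.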

Tom Siegfried, Editor in Chief of Science News, said:

Many experts in neuroscience and statistics have pointed out flaws in the basic assumptions and methods of fMRI - problems that render many of its findings flat-out wrong. ... And it's the responsibility of those who cover science news to make sure such criticisms are not ignored when assessing what should be communicated from the research frontiers. [emphasis added]

Unfortunately, replicating a study by collecting data from a second set of people and doing the same mathematical analysis as with the first group doubles the cost of the research. The peer review process is supposed to catch this sort of error, but when the analysis gets too complicated, peers may not be able to follow it or may not have time to verify the calculations.

"Statistics should support common sense," she [Nikolaus Kriegeskorte, Medical Research Council, Cambridge, England] says. "If the math is so complicated that you don't understand it, do something else."

The Perils of Large Data Sets

The complexities of analyzing large data sets offer "great opportunity for inadvertent mischief" and can "make random fluctuations seem interesting." The only way to guard against such errors is to repeat earlier experiments and check, but that's not exciting enough to interest scientists, and funding agencies don't want to risk having previous results undermined. This makes it difficult to get at the truth.

Getting at the truth becomes even more difficult when serious amounts of money are involved. Climate scientists have been criticized for destroying data, faking some of the analysis, and exercising political power to distort the supposedly sacrosanct peer review process to suppress papers which disagreed with them. Whether or not the distortions of the funding process and the attacks on critics by the pro-global-warming crowd were deliberate fraud, the problem is that scientists don't seem willing to take the time to weed out the errors in their body of peer-reviewed publications when billions of dollars are involved.

Science News pointed out that 28 out of 53 fMRI papers were statistically flawed. The article doesn't address why the peer review process didn't question these bogus papers, but with nonsense results so widespread in a field where inadvertent mischief is so easy, we'd expect some deliberate mischief in other fields which are based on complex analysis of large amounts of data. Climate models come to mind. The editor of Science News points out that journalists have a duty to air opposing views of science, but that doesn't always happen.

The Manipulation of Peer Review

Research scientists aren't the only ones who try to manipulate the review process. The New York Times reports that the Lancet published a study showing that for the first time in decades there's been a worldwide decline in maternal deaths during childbirth: from 526,300 in 1980 to about 342,900 in 2008.

But some advocates for women's health tried to pressure The Lancet into delaying publication of the new findings, fearing that good news would detract from the urgency of their cause, Dr. Horton [editor of the Lancet] said in a telephone interview. "I think this is one of those instances when science and advocacy can conflict," he said.

Dr. Horton said the advocates, whom he declined to name, wanted the new information held and released only after certain meetings about maternal and child health had already taken place. [emphasis added]

Researchers from Harvard, the World Bank, the World Health Organization, and Johns Hopkins estimated 535,900 such deaths in 2005, roughly 56% more than the 342,900 the recent study found for 2008. Advocates planned to ask the US State Department, the Pacific Health Summit, and the United Nations for more money and worried that reports that the problem was being solved might reduce their budgets. Having your problem shrink by more than a third isn't good for your budget, so advocates wanted the report delayed.
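The percentages implied by the maternal-death figures can be checked with a few lines of Python. Note that the 535,900 estimate (for 2005) and the 342,900 figure (for 2008) come from different studies and different years, so comparing them is only a rough guide.

```python
def percent_change(old, new):
    """Percentage change from old to new (negative means a decline)."""
    return (new - old) / old * 100

# Figures quoted above.
deaths_1980 = 526_300
deaths_2008 = 342_900
earlier_estimate_2005 = 535_900

decline_1980_2008 = percent_change(deaths_1980, deaths_2008)        # 1980 -> 2008, new study
earlier_vs_new = percent_change(deaths_2008, earlier_estimate_2005)  # how much larger the old estimate is
shrink = percent_change(earlier_estimate_2005, deaths_2008)          # apparent shrinkage of the problem

print(f"{decline_1980_2008:.1f}%  {earlier_vs_new:+.1f}%  {shrink:.1f}%")
```

The asymmetry is worth noticing: an estimate 56% larger than the new figure corresponds to the problem shrinking by about 36%, not 56%, because the two percentages are taken from different baselines.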

The newer study, which collected a great deal more data than previous studies, was paid for by the Bill and Melinda Gates Foundation. The Gates Foundation is famous for hard-nosed problem analysis whenever anyone seeks funding. They generally require a study before the program starts and a follow-up study by an independent organization to see if the program they paid for made any difference.

Gates Foundation programs that don't show verifiable progress get their funding cut. Government-funded projects, in contrast, tend to go on forever regardless of progress; the agencies work to make the problem seem bigger every year as justification for more money. It's no surprise that Harvard and the other government-funded institutions found the problem of death in childbirth much bigger than it appeared to the privately-funded Gates investigators.

Dr. Horton, who edits the Lancet, had a different view of the Gates report:

Dr. Horton contended that the new data should encourage politicians to spend more on pregnancy-related health matters. The data dispelled the belief that the statistics had been stuck in one dismal place for decades, he said. So money allocated to women's health is actually accomplishing something, he said, and governments are not throwing good money after bad. [as opposed to most other government programs which do throw good money after bad - ed][emphasis added]

Women's health advocates don't seem to want the problem to be finally solved - they very much want to be paid to attend conferences where they can bleat about it and make each other feel good by expressing concern. They clearly disagree with Dr. Horton and believe they'll be able to get more money if the problem doesn't seem to be approaching a solution.

Similarly, climate scientists tried to manipulate the peer review process to silence their critics because they'd get more money if they could convince everyone that the earth was getting warmer and that we had to spend billions of taxpayer dollars to do something about it.

Government Put Politics into Research

Government funding poisons everything it touches by making politics more important than accomplishment; the Gates Foundation collecting actual data upset groups who want to keep government money flowing from us to them.

Instead of curing poverty, the welfare system encourages welfare mothers to have more children than working mothers; this multiplies the problem and lets them ask for more money every year. To keep poverty money flowing, the government keeps changing the definition of poverty whenever it seems that budgets might be cut. A rich man who funded Handel gave us The Messiah; government funding of the arts gave us a crucifix immersed in urine.

Our science agencies have become so obsessed with issues such as gender balance that they're no longer funding cutting-edge scientific research. "After science: Has the tradition been broken?" argues that:

In their innocence of scientific culture, the younger generation of scientists are like children who have been raised by wolves; they do not talk science but spout bureaucratic procedures. It has now become accepted among the mass of professional 'scientists' that the decisions which matter most in science are those imposed upon science by outside forces: for example by employers, funders, publishers, regulators, and the law courts. It is these bureaucratic mechanisms that now constitute the 'bottom line' for scientific practice.

The article "Strategies for Nurturing Science's Next Generation" from Science News of June 20, 2008 has the usual complaints about not enough funding which we've learned to expect from all interest groups who feed at the public trough, but also lists a substantive issue with respect to innovation:

As research funds get tighter, review panels shy away from high-risk, high-reward research, and investigators adapt by proposing work that's safely in the "can-do" category. The clear danger is that potentially transformative research - that which has a chance to disrupt current complacency, connect disciplines in new ways or change the entire direction of a field, but at the same time incurs the very real possibility of failure - finds scant support. [emphasis added]

Academics don't appreciate having their careers disrupted by "transformative research" any more than businesses appreciate being disrupted by new competitors; reviewers don't want anything funded that would question whatever papers qualified them for tenure. Bureaucratic conservatism coupled with conservative "peer review" means that no cutting-edge research will be funded. That's one of the beauties of fMRI research - since you get to choose how to analyze the data after the fact, you can nearly always show something to justify your funding, just like the pyramidologists.

So what's a nation that needs innovation to drive economic progress to do? Private individuals have funded prizes; the X-Prize Foundation offers rewards for people who get to orbit, land on the moon, reach certain goals in genomics, etc. This seems to work.

Current research funding, like most government activity, is driven by politics, jealousies, conservatism, and short-sightedness. Government could get some actual value for its research dollars by setting goals or offering prizes, but as Sen. Proxmire noted when handing out "Golden Fleece" awards for wasting taxpayers' money, most of what we spend on research goes right down the old rat hole.

Golden Fleeces continue to multiply along with the deficit. Where's Senator Proxmire when we need him?

Unfortunately, he's dead. It would take tricky math to detect any activity in his cranium - but we're sure that government-funded fMRI researchers are up to the job.

-

Tools:

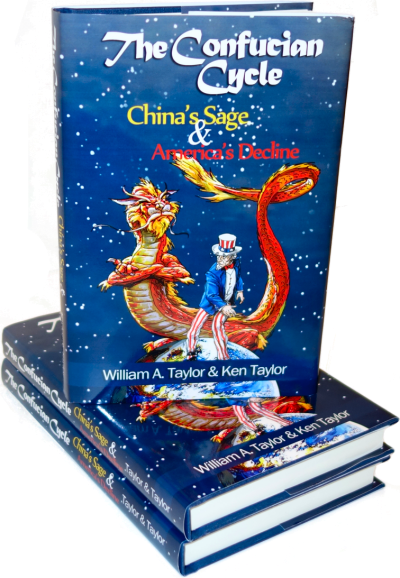

What does Chinese history have to teach America that Mr. Trump's cabinet doesn't know?

http://discovermagazine.com/2010/jan-feb/18

which began:

Magnetic resonance imaging, or MRI, has become a powerful tool for evaluating brain anatomy, but a newer incarnation of the technology called fMRI (the f stands for functional) can probe even more deeply. In studies published over the past year, neuroscientists have shown that fMRI can peel away the secrets of emotion and thought; in fact, some of their findings are almost like mind reading.

Using fMRI, New York University neuroscientist Elizabeth Phelps has identified two brain regions - the amygdala and the posterior cingulate cortex, associated with emotional learning and decision making - that are crucial in forming first impressions. "Even when we only briefly encounter others, these regions are activated," Phelps says.

One suspects that they hadn't read about the dead fish.

http://www.sciencenews.org/view/generic/id/58945/title/Atrazine_paper%E2%80%99s_challenge_Who%E2%80%99s_responsible_for_accuracy%3F

Buried within a new paper discussing conflict-of-interest issues is an intriguing little case study. It looks at risks to wildlife from atrazine - a widely used herbicide - as assessed by a massive peer-reviewed analysis of published data. It charges that this analysis "misrepresented over 50 studies and had 122 inaccurate and 22 misleading statements."

Strong charges, and worth investigating. But after talking to the lead authors of the review paper and its critique, I come away with a suspicion that the real take-home message here is about something quite different: publishing's ability to vet massive quantities of scientific information. The issue emerges in an examination of a 2008 paper in Critical Reviews in Toxicology. "Based on a weight of evidence analysis of all of the data," it concluded, "the central theory that environmentally relevant concentrations of atrazine affect reproduction and/or reproductive development in fish, amphibians, and reptiles is not supported by the vast majority of observations." For many other potential toxic endpoints, it said, "there is such a paucity of good data that definitive conclusions cannot be made."

As part of a paper released early online, ahead of print, in Conservation Letters, Jason Rohr and Krista McCoy of the University of South Florida, in Tampa, critiqued the accuracy of that 2008 review. In doing so, they say they uncovered "an important contemporary example of a conflict of interest resulting in a potential illusion of environmental safety that could influence [regulation]."

Alleged bias

That conflict of interest, they charge, stems from the fact that the review "and the research of many of its authors were indirectly or directly funded by the company that produces the herbicide, Syngenta Crop Protection."

Ecologist Keith Solomon of the University of Guelph in Ontario, Canada, agrees that any review of such a high-volume chemical needs to be credible and scientific. He's also the lead author of the disputed review - and argues that receiving industry funds doesn't de facto render his paper's conclusions biased or bogus. He argues that the value of research should be measured simply by how it was conducted and interpreted.

Which is true - except that plenty of studies have identified trends showing that organizations with a vested financial interest in a particular outcome often disproportionately report findings advantageous to their sponsors.

Rohr and McCoy claim to have identified just such a trend in the Solomon et al review of atrazine data. Of 122 alleged mistakes that the pair tallied, all but five would have benefited Syngenta and the safety assessment of atrazine, they say. And among the five outliers, only one mistake would work against Syngenta's interests; the rest are neutral. All 22 misleading statements, they say, come out in Syngenta's favor.
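How lopsided is 117 to 1? One rough way to gauge it is an exact sign test, sketched below in Python. It assumes, purely for illustration, that if the review's errors were honest slips, each non-neutral error would be equally likely to favor or disfavor Syngenta, independently of the others. Real errors are not independent coin flips, and Solomon disputes many of the tallied items, so a tiny p-value shows only that the pattern is unlikely to be pure chance, not who is right.

```python
from math import comb

def sign_test_p(k, n):
    """Exact two-sided sign test: chance of a split at least this lopsided
    if each of n items were equally likely to fall either way."""
    k = max(k, n - k)
    tail = sum(comb(n, i) for i in range(k, n + 1)) / 2 ** n
    return min(1.0, 2 * tail)

# Tallies as reported above: 117 errors favor the manufacturer, 1 cuts against it,
# and the 4 neutral ones are left out of the test.
favors, against = 117, 1
p = sign_test_p(favors, favors + against)
print(f"p = {p:.1e}")
```

For comparison, an even 5-to-5 split gives p = 1, and a clean 10-to-0 sweep gives about 0.002.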

Dueling charges

Rohr, an ecologist, studies amphibians and possible threats posed to them from environmental agents and other factors. So he was familiar with what his colleagues had been publishing over the years about atrazine when the Solomon et al paper came out. And as he read the review, certain statements raised an eyebrow. They didn't seem to reflect what he'd remembered the cited papers as having concluded.

Other things in the review just angered him. Like when it discounted three of his team's papers for not having quantified the actual concentrations of atrazine to which his frogs were exposed. "In fact, we did report those actual concentrations," Rohr says.

Such issues prompted Rohr and McCoy to conduct their own meta-analysis of data investigating atrazine's impacts on wildlife. The study was reported earlier this year in Environmental Health Perspectives - and reported a host of apparently deleterious impacts attributable to the herbicide.

While working on this analysis, they probed the Solomon et al review in detail. It took "a very long time," Rohr says. And turned up some surprises. Like a claim in one cited paper that atrazine caused no potentially deleterious changes at exposures below 100 parts per billion. "We went back to the study and found effects started at just 10 ppb," Rohr says.

In another instance, the review claimed that a cited study had reported the survivorship of frogs in a tank with no atrazine as 15 percent. "In fact, it was 85 percent," Rohr found.

The USF pair documents its criticisms in a 45-page online supplement.

"A lot of these were the kinds of comments you might get from reviewers," Solomon says. "And there are some that may actually be reasonable."

Indeed, he adds, it's expected that "in scientific discourse, everybody critically evaluates what everybody else does and says." A paper offers hypotheses or conclusions and then looks to see if someone can shoot them down. "That's what science is all about," Solomon says.

Talk to him long enough and you'll see he has his own laundry list of critiques about the Conservation Letters analysis. Some challenged portions of his paper's text were taken out of context, he claims - and wouldn't look fishy if read in their entirety. (He pointed, for instance, to a complaint that one passage in his group's review didn't cover all of the toxicological data in one area. "In fact," he says, "the preceding statement says this material was reviewed in a book published in 2005. We summarized its information, but never said it was supposed to be all-inclusive.")

Solomon also charges that Rohr and McCoy "have a fundamentally different approach to how they look at the toxicology data. We applied guidelines for causality" used in much of biology, Solomon argues. They involve things like: "Is there consistency across several studies? Is there a plausible mechanism of action? Is there a concentration response?"

By contrast, he says, what Rohr's team does "is look for any difference between some exposure and the control. And is it statistically significant?" Sometimes these purported atrazine effects showed no dose response or ran counter to what's known about the biology of the animal, Solomon says.

Rohr, as might be expected, challenges Solomon's assessment.

Bottom line: These teams should duke it out in the literature and let their colleagues weigh in on the strengths of their respective arguments.

Reviews: a special case?

The Solomon et al review ultimately ran 50 pages in its published form and by my rough count cited at least 194 separate papers, five meeting abstracts, four industry documents, a master's thesis, four government review documents, a conference synopsis and portions of about a dozen books. Can we honestly expect that errors won't slip through when authors attempt to manage boatloads of data? And if they do, who's to catch them?

Peer reviewers?

Keep in mind that peer review is a volunteer enterprise. No one gets paid. So there is little incentive for a reviewer to spend weeks or more anonymously ferreting out potential errors from a gargantuan manuscript. His or her task should be finding egregious errors and leaving the small stuff to a journal's staff.

The problem: Many journals have little more than copyeditors on staff, people to make sure that style is consistent in the presentation of data and that the text's grammar is correct.

At the International Congress on Peer Review and Biomedical Publication, last September, several journals noted they not only had staff to perform a detailed checking of facts, but also in-house statisticians to ensure the appropriate tests of significance were used and reported. These tended, not surprisingly, to be the bigger, wealthier journals. An oxymoron in environmental science.

Owing to the importance of good literature reviews, perhaps humongous enterprises like the one by Solomon's group shouldn't be published in journals where nitpicky fact checking is not the rule. After all, whether 10 versus 100 ppb concentrations of a toxic chemical provoke harm could prove pivotal to regulators looking to evaluate the safety of a product or procedure.

Sure, fact checking takes time and costs money. But look at all of the resources wasted when good science is misrepresented in the court of public policy. We should view these costs as societal investments to make sure that the science on which we depend is reported - and ultimately employed - truthfully.