No Apple for the FBI

Apple is absolutely right in refusing to create a phone-cracker for the FBI.

The American government has been putting great pressure on our technology companies to build "back doors" into their software so that the Government can decrypt our private data. This ability would be used sparingly, only when fighting serious crime, and only with a judge's approval, of course.

Of course.

The government has been looking for a test case to push its position through the courts. It was recently given a cell phone which had been used by one of the Islamic terrorists who engaged in "workplace violence" in San Bernardino last year. The phone is encrypted. The FBI can't read the data in it, so they want the court to order Apple to break into the phone, just this once. Yep, just this once, and never again.

This is part of Apple's response, which was written by CEO Tim Cook:

We have great respect for the professionals at the FBI, and we believe their intentions are good. Up to this point, we have done everything that is both within our power and within the law to help them. But now the U.S. government has asked us for something we simply do not have, and something we consider too dangerous to create. They have asked us to build a back door to the iPhone.

Specifically, the FBI wants us to make a new version of the iPhone operating system, circumventing several important security features, and install it on an iPhone recovered during the investigation. In the wrong hands, this software - which does not exist today - would have the potential to unlock any iPhone in someone's physical possession.

The FBI may use different words to describe this tool, but make no mistake: Building a version of iOS that bypasses security in this way would undeniably create a backdoor. And while the government may argue that its use would be limited to this case, there is no way to guarantee such control. [emphasis added]

The essential, vital, unarguable point is that this hacking tool does not exist today - and, given its immense capacity for mayhem, the world would be better off if it never existed at all.

Mr. Cook doubtless remembers how successful generations of American Presidents have been in putting the nuclear genie back in the bottle. Instead of being limited to a few governments, nuclear weapons capability is spreading to more and more states whose motives are difficult to discern, and it's not hard to imagine a time soon when non-state actors will get their dirty mitts on one.

This, despite the fact that nuclear weapons are very hard to build! Although their existence and their basic operating principles have been known for decades, the actual engineering and manufacture of a working nuclear weapon takes many years and billions of dollars.

A software back door would be completely unlike nuclear weapons, because it wouldn't have the protection of difficulty in replication. As Napster and its countless clones have proven, once created, a digital work can be copied as easily and as universally as a web page.

It would be one thing for a safe manufacturer to turn over the combination to one specific safe under court order. Apple has done that in response to subpoenas, as it should.

It would be quite another thing for a safe manufacturer to first build, and then give the government, a device that would open any safe in the world. Yet that's what the government wants of Apple.

Would gun owners accept the FBI having a tool that could prevent any Smith & Wesson handgun from firing? Or would the company go bankrupt overnight?

How could anyone protect such a desirable secret? How much could you get for a tool which could open any iPhone in the world? How could anyone, no matter how virtuously inclined, guarantee that he would resist the temptation to sell it - particularly considering that it doesn't even need to be stolen; a simple software copy will do?

Once out, of course, that's it for any hope of protecting your private information if you lose your phone. But it's actually worse than that: hackers are very skilled at reverse engineering of software to learn the existence of vulnerabilities.

What guarantee is there that a clever hacker couldn't analyze Apple's super-cracker software and figure out a way to crack into an Apple device without needing physical possession of it? Mr. Cook doesn't want his engineers figuring out how to do this, or even thinking about it. Neither do we.

Our concern is especially valid when government holds the key. Our government in general, and the Obama administration in particular, has given ample evidence that it sees no obligation to follow the law and that it is incapable of protecting important data.

When encryption is outlawed, only outlaws will have encryption - and when everyone is an outlaw because that's the only way to protect yourself, well - does that mean nobody is an outlaw? Do we really want to find out?

-

Tools:

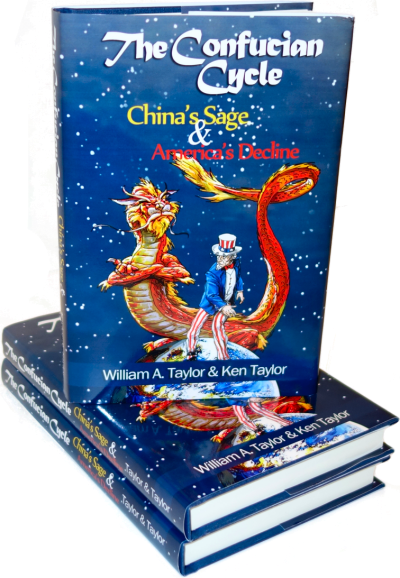

What does Chinese history have to teach America that Mr. Trump's cabinet doesn't know?

Seems to me that Apple could do everything that the FBI wants, without actually giving the FBI the software. The FBI could be required to simply witness Apple obtaining the data. The software should never be allowed out of either Apple's sight or custody.

Aside from that, Apple is 100% correct to proceed as they are. The Feds cannot be trusted. And law enforcement, to include the FBI, was able to solve crimes before cellphones ever existed. Why can't they solve the crimes now?

Not knowing exactly what happened during the "missing" 18 minutes is a small matter compared to the loss of privacy for millions of others. And the FBI used to be able to identify associates via non-cellphone data prior to this. They are just being lazy, acting liberal.

The FBI does not want to give the phone to Apple, they want Apple to give them the software and trust that they will not misuse it.

Even if Apple wasn't going to give it to the feds, how can Mr. Cook guarantee that none of his employees could be tempted? He doesn't want his company to build this nuclear bomb or even think about building it.

"But, but… But, terrorism!!"

Yes, but, had our government actually been doing what it is supposed to do, that being looking out for our interests above its own and above the interests of foreigners, and had barred entry to these useless barbarians on 9/12, if not before, we wouldn't need to have this conversation.

This would be a good moment for Apple and Google to announce total end-to-end encryption relying on public/private key pairs that each user generates on their own (similar to how Dropbox and other products do it).

That would prevent Apple or Google from being *able* to crack it and give them 100% plausible deniability.

The conversation would go like this:

FBI: Evil person did yada yada... Here's the device, please crack! I'll wait in the lobby.

Apple: Can't do it.

FBI: You have to! I'm the government! Terrorism, terrorism, rabble, rabble

Apple: No, you misunderstand. I literally CAN'T do it. We don't have a way of decrypting it. You'd have to get the keys from the person who purchased the phone.

FBI: Waaaaa!

Apple: Perhaps you should borrow some time from your friends at the NSA with the big super computers? They could brute force it. At current computing levels, they should have it ready for you in about 2 years.

FBI: I'll show myself out.

Since Apple has that kind of security on their phones, I am considering getting an iPhone.

Just imagine if Hillary had a copy of that back-door software on her 'private' server :)

Oh... by the way, why doesn't the Dept of Justice go after Hillary with the same urgency or vigor…

just askin'

@RS Douglass

Democrats are very seldom prosecuted no matter what they do. Our earlier article, "The Financial Importance of Being A Democrat" at http://www.scragged.com/articles/the-financial-importance-of-being-a-democrat lists many Democrat crooks who weren't prosecuted and it doesn't even mention Hillary!

Indict Hillary first before the FBI seeks an indictment against Apple! Her indictment is a sure thing!

@J Hudgins - That would be difficult - Mr. Obama certainly forwarded classified information to her insecure server. He and the top state department brass are as guilty as she.

I think they should all get orange jump suits, but I suspect that Mr. Obama would beg to differ.

The key point in Apple's response is that the phone would have to be in their physical possession to load the software, because it cannot be loaded by the normal process (because you have to know the password to do that...)

So, if the phone is in your physical possession, and you can break it enough to load software at the hardware level, you can also read data at the hardware level. So why do they need the software if they can read the data? Once they have the data, a brute force attack is very simple, because the password can only include a few digits as it must be entered through the phone keypad in normal use. That would take a few seconds at most.

@Ed Said- "So why do they need the software if they can read the data? "

Because the software requested to help the FBI ONLY disarms the 'auto-destruct' feature (which is triggered if the entry code is tried unsuccessfully 10 times).

The actual DATA of importance to the FBI is still encrypted on the phone.

The FBI (not Apple) will then force-enter codes one after another until they 'get in'… once 'in' (with the finally correct code), the data is unencrypted, and is readable…
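For anyone who wants to see the arithmetic behind that, here is a rough sketch. It assumes an all-numeric passcode, and the 0.08 seconds per guess is a made-up figure for illustration only, not something stated in this thread or in Apple's letter; the function names are mine as well.

```python
# Rough arithmetic for guessing a numeric passcode.
# ASSUMPTION: each guess costs a fixed amount of time; 0.08 s is a
# hypothetical figure chosen only to make the numbers concrete.
ASSUMED_SECONDS_PER_GUESS = 0.08


def worst_case_seconds(digits: int, seconds_per_guess: float) -> float:
    """Time needed to try every numeric passcode of the given length."""
    return (10 ** digits) * seconds_per_guess


def chance_within_limit(digits: int, attempts: int) -> float:
    """Chance of hitting the right code in a limited number of distinct guesses."""
    keyspace = 10 ** digits
    return min(attempts, keyspace) / keyspace


for digits in (4, 6):
    hours = worst_case_seconds(digits, ASSUMED_SECONDS_PER_GUESS) / 3600
    odds = chance_within_limit(digits, 10)
    print(f"{digits} digits: {10 ** digits:,} codes, "
          f"about {hours:.1f} hours to try them all, "
          f"{odds:.4%} chance within 10 tries")
```

With the 10-try limit in place, random guessing almost never works; with it disabled, trying every code becomes a matter of waiting. That is why the limit is the one feature the FBI wants removed.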

I hope this explanation helps you understand.

In any event, the FBI IS asking (indirectly) for the technique to access ANY iPhone with this yet-to-be-created software demanded of Apple… a terrible thought, that must be resisted.

Any information about co-conspirators or contacts on that phone will be out of date by the time the Government and Apple end their dispute, a dispute that clearly won't be settled until it reaches the Supreme Court. That will take months, months that will give anyone on that phone time to disappear, if they haven't already.

This is a gun grab by the Feds, nothing more.

@RSD But they still wouldn't have the password, so they would still have to brute force it as you say, just on the phone. That is the only reason why the "10 strikes and you're out" rule is a problem for them. Also, on the phone they would have to try manually, but off the phone they could automate the attack, and it would be over in seconds.

Bypassing the password to get software onto the phone at the hardware level is more work than reading data off the phone, since you have to be precise about where you put it so you don't overwrite something you want. If you are just reading, you read it all and figure out which bytes you want off the hardware.

Of course they could ask for Apple to build a back door into the encryption, not just defeat the 10 tries limit. But wait, Apple already did, they just didn't keep a key for it. Of course, the county didn't use that feature, but it is exactly how (good) corporate IT departments can unlock a phone when the user forgets their password.